originally posted on GitHub by evolv Consultant, Ben Jenkins

I wanted to write a blog to demonstrate the power of integrating dbt within Fivetran to load data into Snowflake. So, I thought about what data is freely available and decided that rather than one more analysis of pandemic data I’d prefer to focus on the upcoming baseball season.

Assumptions for running this tutorial

This post demonstrates how to create a data pipeline for using Fivetran, Snowflake, dbt and git. As such, you will need have the following to do this tutorial:

- A Snowflake account to use as a target data connection.

You can get a free trial here: Snowflake Signup - A Fivetran account.

You can get a 14 day trial here: Fivetran Signup - You will need dbt core installed locally.

Here are instructions for setting it up: dbt setup - You will need a git account of some sort.

You can sign up for a free github account: Github Signup

Play Ball

Baseball data background… “blah blah blog”

A quick Google search on free data helped me find this site: Baseball Archive Statistics

Where I downloaded the baseball databank from here: Baseball Databank

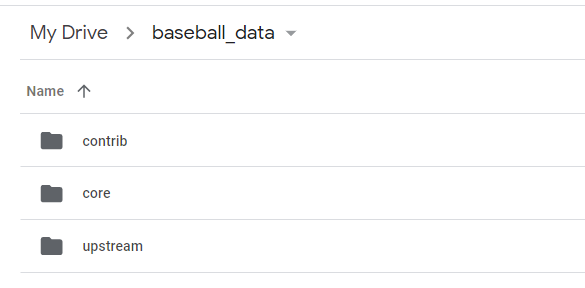

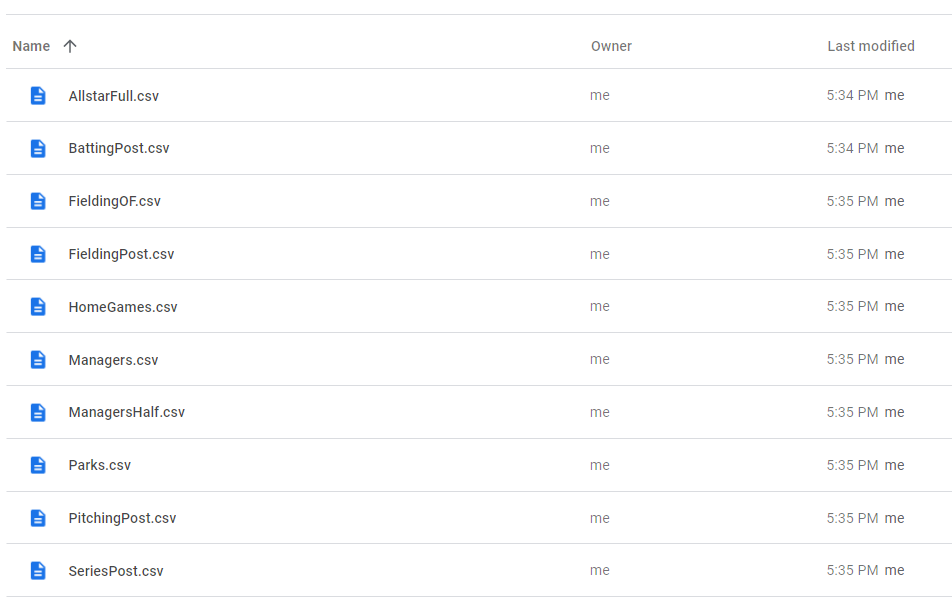

And extracted into separate google sheets:

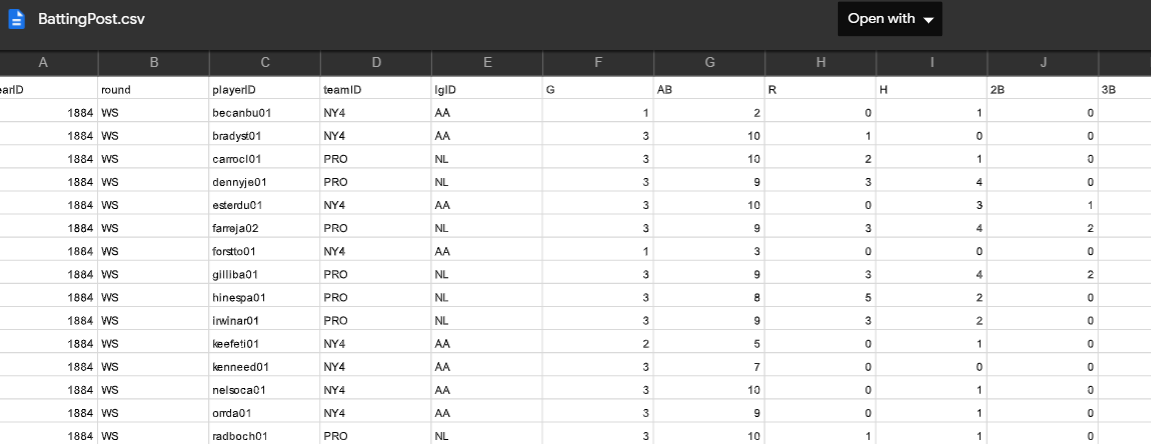

Sample of batting statistics:

And the data contains definitions in this readme.

readme:2.9 BattingPost table

yearID Year

round Level of playoffs

playerID Player ID code

teamID Team

lgID League

G Games

AB At Bats

R Runs

H Hits

2B Doubles

3B Triples

HR Homeruns

RBI Runs Batted In

SB Stolen Bases

CS Caught stealing

BB Base on Balls

SO Strikeouts

IBB Intentional walks

HBP Hit by pitch

SH Sacrifices

SF Sacrifice flies

GIDP Grounded into double plays

Batter up

We are going to create a table in Fivetran using the BattingPost google sheet loaded previously.

Adding your first connector (this step depends on having setup the Fivetran User with the appropriate privileges in Snowflake).

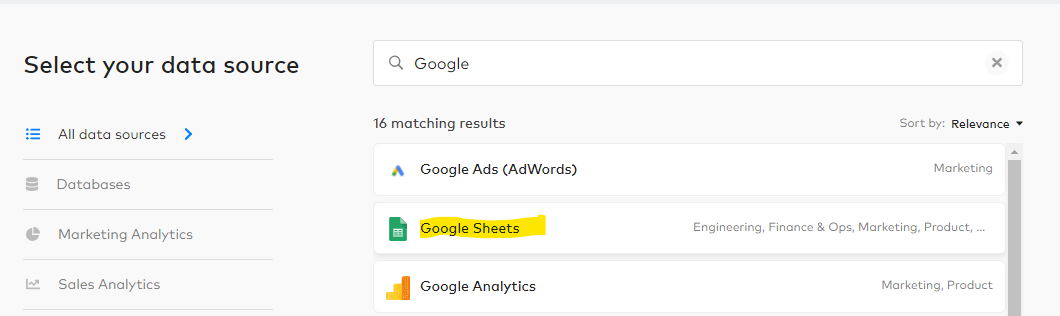

In Select your data source Search for Google and select Google Sheets. Click Continue Setup:

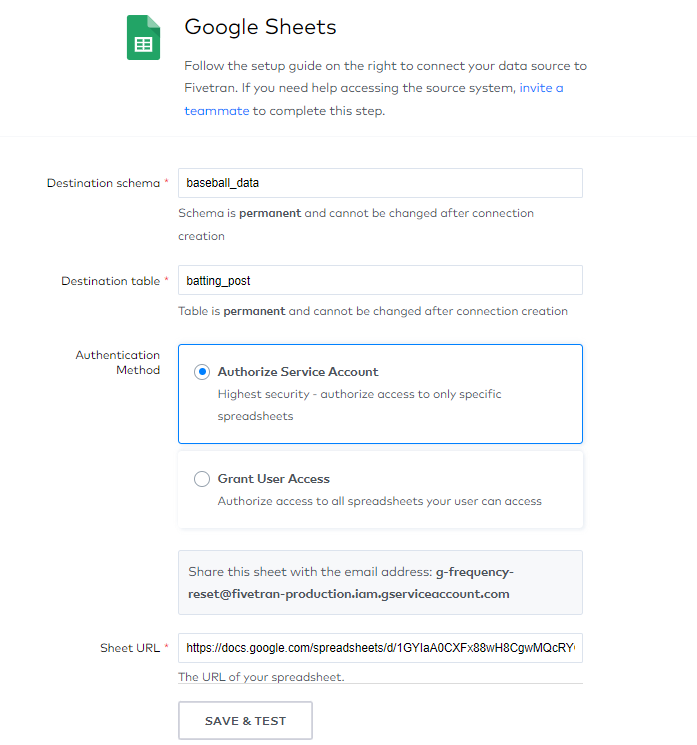

On the Google Sheets page enter the following:

Destination schema: `baseball_data`

Destination table: `batting_post`

Authentication Method: choose Authorize Service Account

Sheet URL: https://docs.google.com/spreadsheets/d/1GYIaA0CXFx88wH8CgwMQcRYCN8YxiX_-y8YTeN-VUg4/edit#gid=2089050719

You may have to scroll down to see the Find Sheet button. Click on it and you should find DATA in the Named Range drop down.

Then click ‘Save and Test’:

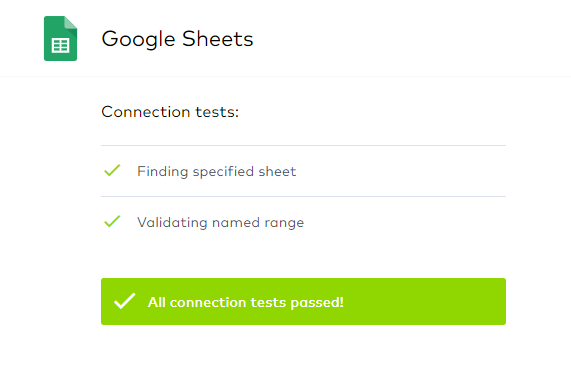

You should see this if everything is successful:

Click Select your data to continue.

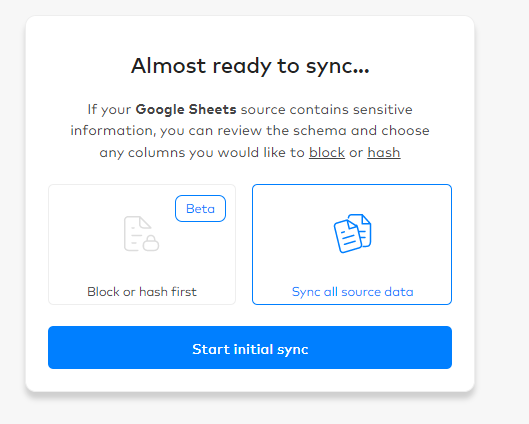

You should get a warning for starting to do the initial sync. As this is public data it is not a concern. But be mindful of your company’s data governance policies for your production environment. Click start initial sync:

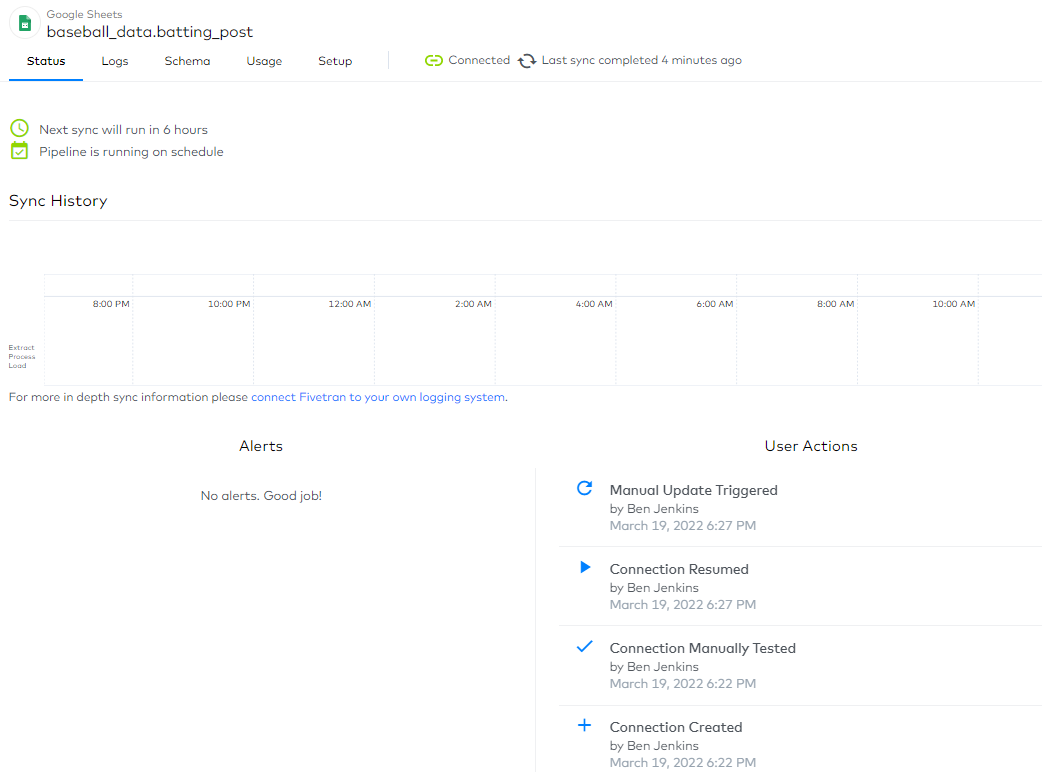

This launched the first sync and by default set the next one to 6 hours:

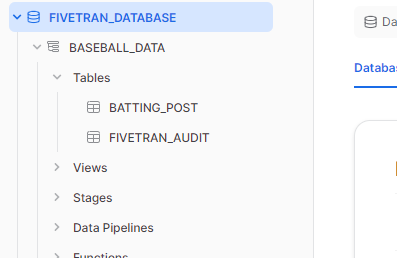

In Snowflake, we can see that the schema BASEBALL_DATA was created along with the BATTING_POST table and FIVETRAN_AUDIT table.

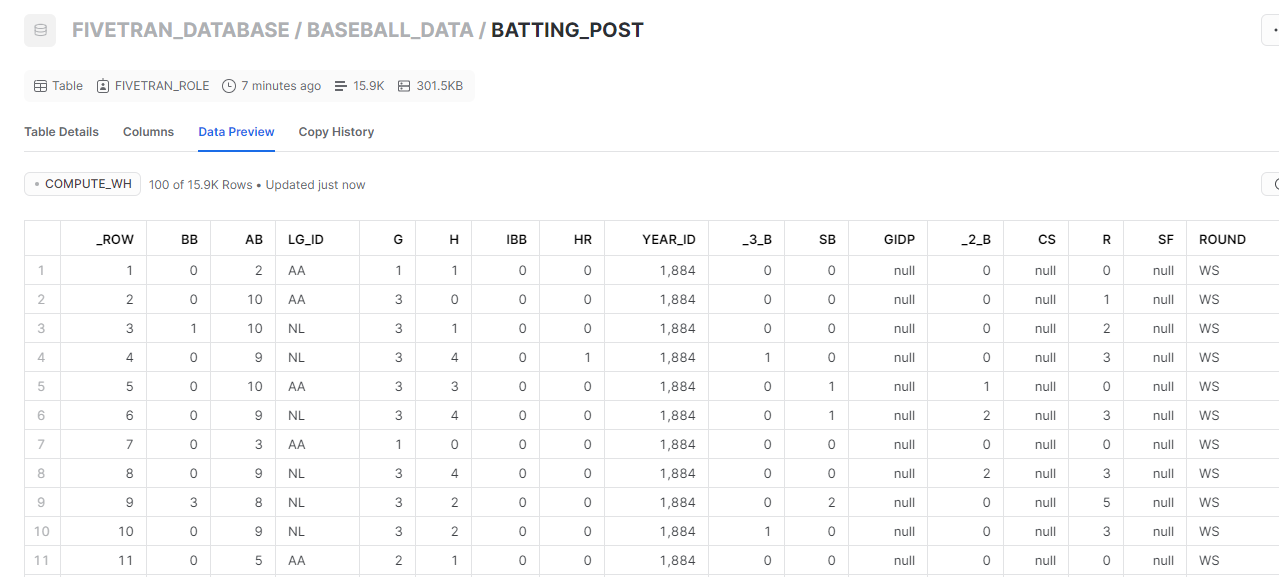

We can preview the data and see that it loaded as expected:

Those column names are ugly though. We could probably make them look better with human readable names.

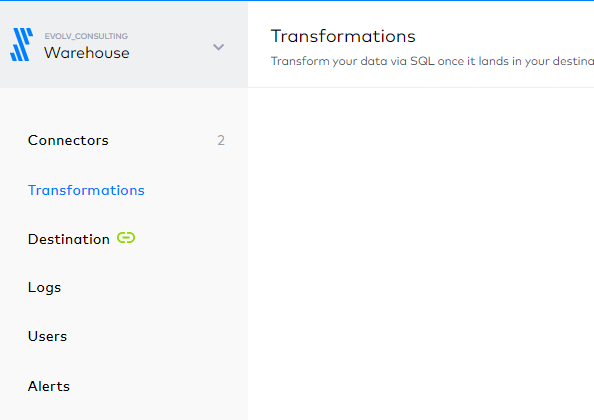

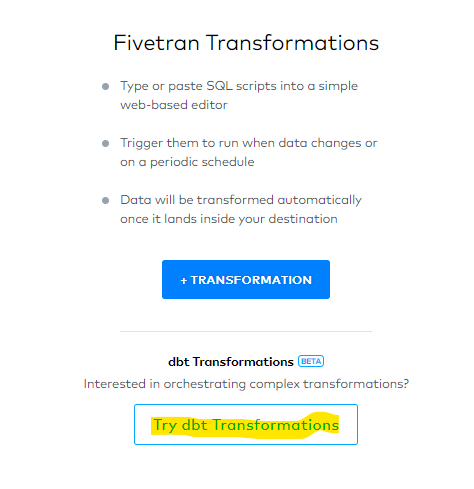

We can transform the data within Fivetran. And, we have choices. We can use Fivetran’s Transformations or we can use dbt from within Fivetran. First, from within Fivetran on the left hand navigation choose Transformations:

Then select Try dbt Transformations (you will authorize your github repo to be used by Fivetran)

Click to Enable dbt Transformations then you will be presented with this requirement to proceed:

I hadn’t setup a repo previously, so I chose Help me set up my dbt project.

This brings you to this page which is pretty self explanatory: Transformations for dbt Core Setup Guide

The notes I’ll add are here:

- From the prerequisites you’ll need to install dbt.

- I’ve found this helpful for setting the dbt grants in Snowflake.

- You can skip Step 1 and begin with Step 2 – Create a new repository.**I have a github account and am going to add a repo to it.

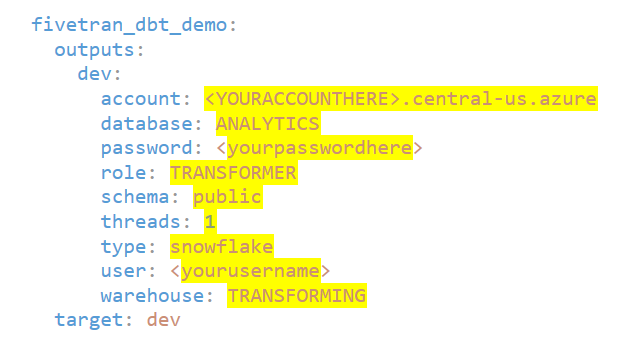

- Step 3 doesn’t mention adding the Snowflake database info. But, you’ll need to know info to setup your profile:

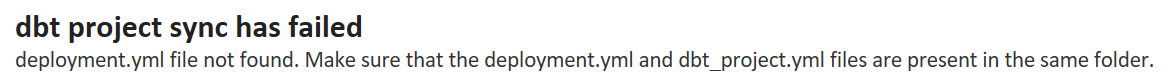

When I completed the Transformations for dbt Core Setup Guide I got the following error:

dbt project sync has failed deployment.yml file not found. Make sure that the deployment.yml and dbt_project.yml files are present in the same folder.

I didn’t see in setup or pre-requisites an example of adding the deployments.yml file. Here is a link to a sample from Fivetran.

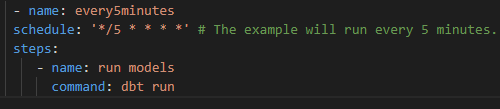

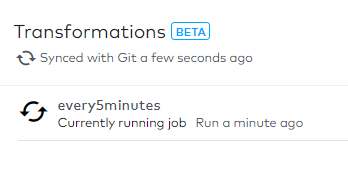

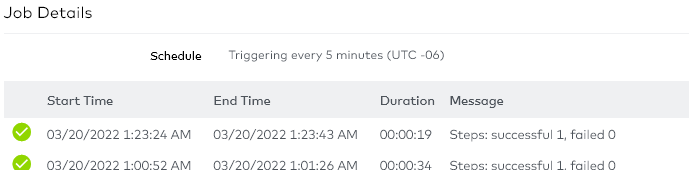

I chose to create a job that runs every 5 minutes while developing and unit testing and then change the schedule after that to less frequent:

With this being setup dbt has a job that will rebuild the existing models that are setup in the project:

But, we haven’t started using dbt to work on the baseball data.We’ll start transforming our BATTING_POST data.

Letting dbeaver generate and format a select statement gives a query that is not nice to look at:

SELECT

"_ROW",

BB,

AB,

LG_ID,

G,

H,

IBB,

HR,

YEAR_ID,

"_3_B",

SB,

GIDP,

"_2_B",

CS,

R,

SF,

ROUND,

SH,

TEAM_ID,

HBP,

RBI,

SO,

PLAYER_ID,

"_FIVETRAN_SYNCED"

FROM

FIVETRAN_DATABASE.BASEBALL_DATA.BATTING_POST;

This should only persist in a raw area. Let’s create a model to clean it up.

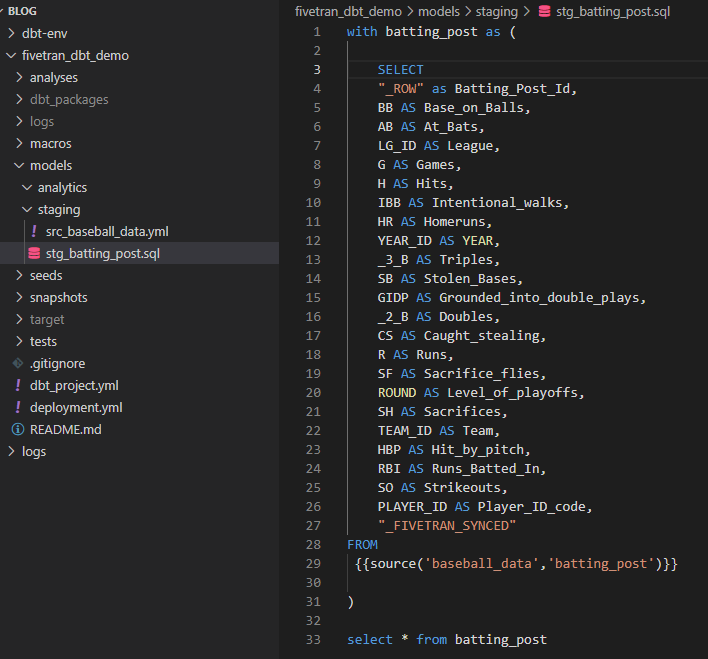

Using the data dictionary we can produce a better looking query to use in our staging model.

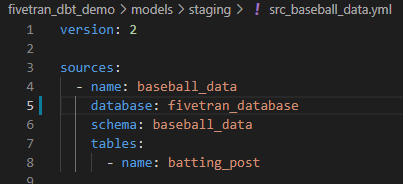

And along with the new sources file src_baseball_data.yml.

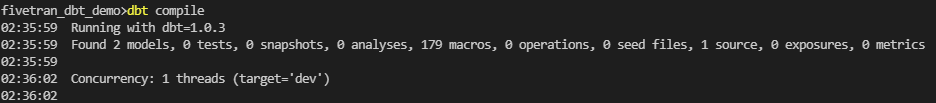

We should be ready to use dbt compile to execute our code.

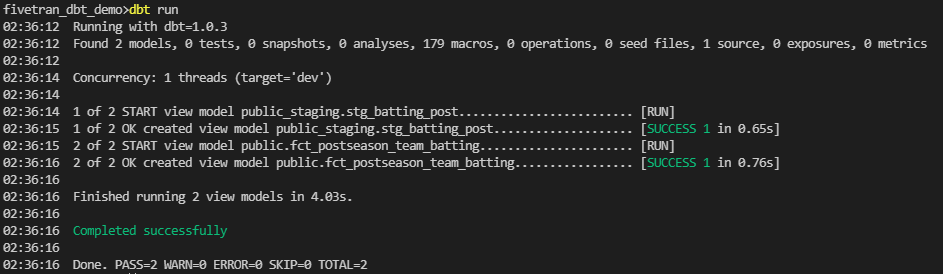

Dbt run completes successfully:

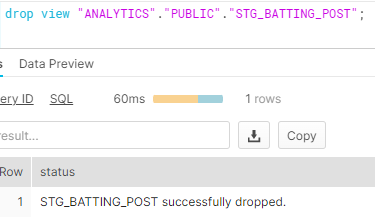

And in Snowflake this shows us that we have human readable data. But, we haven’t shown that Fivetran added the data. With successful runs of the same data, I added time to the job. Now, I want to make sure that Fivetran is executing the run and not my command line. So, I drop the view.

And update the schedule to run on the 5’s again.

It completes successfully:

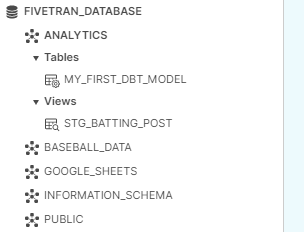

And the view has been rebuilt by dbt.

Summary

In this tutorial we’ve just begun to see how Fivetran can be integrated to use dbt within its pipelines.

Evolv consulting has experts in each of the technologies discussed here as well as many others to assist you in building out your modern Cloud Data Solutions. Contact us today for assistance.